Posts by valterc

|

1)

Message boards :

Number crunching :

signal 11 (sigsegv) on Vega 20 / ROCM 4.1 / Centos 7

(Message 70831)

Posted 24 May 2021 by valterc

Post: Dear all, I just tried to run Milkyway on the system described in the title line:

Device 'gfx906:sramecc+:xnack-' (Advanced Micro Devices, Inc.:0x1002) (CL_DEVICE_TYPE_GPU)
Board: Vega 20
Driver version: 3241.0 (HSA1.1,LC)
Version: OpenCL 2.0
Compute capability: 0.0
Max compute units: 60
Clock frequency: 1725 Mhz
Global mem size: 34342961152
Local mem size: 65536
Max const buf size: 29191516976
Double extension: cl_khr_fp64

but all I got was signal 11 (segmentation violation) coming from libamdocl64.so. Many other OpenCL applications work without a problem (Collatz, Primegrid, Amicable etc.). Does anyone have hints or suggestions?

|

2)

Message boards :

Number crunching :

R9 290

(Message 61849)

Posted 7 Jun 2014 by valterc

Post: Yep, no success at all. This may work for the 280 (though mine works without it, just with the Catalyst driver) but not for the 290...

|

3)

Message boards :

Number crunching :

R9 290

(Message 61847)

Posted 6 Jun 2014 by valterc

Post: I recently got an R9 280-X and it works perfectly with MilkyWay (with the 14.4 driver). I also got an R9 290-X (Hawaii) and it doesn't work at all: MilkyWay claims that it is an ATI GPU R600 (R38xx), which has no OpenCL support. However, it does have OpenCL support (Collatz works flawlessly, and GPU-Z confirms this); what it's missing is CAL support. Either way, it doesn't work with MilkyWay, and I wanted to use it for this project (yes, I know about the poor DP performance). Has anyone here had success running a Hawaii GPU? If so, can you please share some hints?

|

4)

Message boards :

News :

New MilkyWay Separation Modified Fit Runs

(Message 60522)

Posted 5 Dec 2013 by valterc

Post: I just checked the credit issue again and found the same behavior as a few weeks ago. Credit is fixed at 213.76 while runtimes vary considerably (on my HD7970), from ~55s (ps_modfit_15_3s_128wrap_3_1382698503_6716313_2) to ~85s (de_modfit_15_3s_bplmodfit_128wrap_1_1382698503_6587065_3).

|

5)

Message boards :

News :

New MilkyWay Separation Modified Fit Runs

(Message 60263)

Posted 31 Oct 2013 by valterc

Post: I gathered some statistics on my 7970 (http://milkyway.cs.rpi.edu/milkyway/results.php?hostid=100828):

MilkyWay@Home v1.02 (standard)
average runtime: 28.27, credit per day: 334,636.29

Milkyway@Home Separation (Modified Fit) v1.28
Here I get two different types of workunit, with the same credit and runtimes of ~84s or ~55s, giving the following figures:
average runtime: 74.03, credit per day: 249,476.42
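As a rough cross-check of the Modified Fit figures: with a fixed credit per workunit, credit per day is simply the number of tasks that fit into a day times that credit. The sketch below assumes the 213.76 credit per task quoted in the 5 Dec 2013 post of this thread, one task running at a time, and no idle time between tasks; `credit_per_day` is a hypothetical helper name, not part of any BOINC tool.

```python
SECONDS_PER_DAY = 24 * 60 * 60

# Assumption: fixed credit per Modified Fit task, as quoted in this thread.
MODFIT_CREDIT = 213.76


def credit_per_day(avg_runtime_s: float, credit_per_task: float) -> float:
    """Credit earned in 24 h if tasks run back to back, one at a time."""
    tasks_per_day = SECONDS_PER_DAY / avg_runtime_s
    return tasks_per_day * credit_per_task


# Average runtime from the post, plus the two observed workunit types.
for label, runtime_s in [("average", 74.03), ("fast", 55.0), ("slow", 84.0)]:
    print(f"{label:>7}: {credit_per_day(runtime_s, MODFIT_CREDIT):,.0f} credit/day")
```

With the 74.03 s average, this lands within a few credits of the 249,476.42 quoted in the post, which suggests the per-day figure was derived the same way.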

|

6)

Message boards :

News :

Separation updated to 1.00

(Message 52943)

Posted 9 Feb 2012 by valterc

Post: CAL 12.1, BOINC 6.10.60, 2x HD 6950, no app_info. I'm getting tons of work (mixed type, both v1.00 & v0.82). Everything is OK, although the 1.00 WUs are a little slower (say ~72s instead of ~68s). Is that normal?

|

7)

Message boards :

Number crunching :

Validate Error

(Message 52774)

Posted 2 Feb 2012 by valterc

Post: In some cases the stderr from BOINC is truncated or missing completely. I think I mostly fixed the problem for future updates.

I also sometimes get this kind of error (empty stderr):

<core_client_version>6.10.60</core_client_version>
<![CDATA[
<stderr_txt>
</stderr_txt>
]]>

|

8)

Message boards :

News :

Any remaining major credit or application problems?

(Message 49539)

Posted 24 Jun 2011 by valterc

Post: For me, preferably not at the weekend (I'll be out and about...)

|

9)

Message boards :

Number crunching :

Bad WUs

(Message 48740)

Posted 13 May 2011 by valterc

Post: I just got two of them:

de_separation_10_3s_free_2_420330_1305301089_1
de_separation_10_3s_free_2_423122_1305301520_0

The stderr log is the following:

<core_client_version>6.10.60</core_client_version>

< valterc valterc

Post: Please be aware that Catalyst 11.2 has some big problems (at least with the 5890). I reinstalled a previous version and everything is running smoothly...

|

11)

Message boards :

Number crunching :

Strange things on HD5970

(Message 46414)

Posted 3 Mar 2011 by valterc

Post: Hi all, starting from today I'm getting results like this:

CAL Runtime: 1.4.1016
Found 2 CAL devices

Device 0: ATI Radeon HD5800 series (Cypress) 1024 MB local RAM (remote 2047 MB cached + 2047 MB uncached)
GPU core clock: 1000 MHz, memory clock: 1500 MHz
1600 shader units organized in 20 SIMDs with 16 VLIW units (5-issue), wavefront size 64 threads
supporting double precision

Device 1: ATI Radeon HD5800 series (Cypress) 1024 MB local RAM (remote 2047 MB cached + 2047 MB uncached)
GPU core clock: 1000 MHz, memory clock: 1500 MHz
1600 shader units organized in 20 SIMDs with 16 VLIW units (5-issue), wavefront size 64 threads
supporting double precision

...
WU completed. CPU time: 26.5358 seconds, GPU time: 92.3703 seconds, wall clock time: 94.065 seconds, CPU frequency: 2.80643 GHz
...

Validate state Valid
Claimed credit 0.154616492621667
Granted credit 213.756235697696
application version MilkyWay@Home v0.23 (ati13ati)

Now, three things look strange:
a) GPU core and memory frequencies are not the right ones; they should be 780/850 according to Afterburner and GPU-Z
b) too much CPU time?
c) I'm also starting to get 'validate errors'...

This is the host: http://milkyway.cs.rpi.edu/milkyway/results.php?hostid=205229

Any hints about this? Thank you.
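To put a number on point b), the timings in the "WU completed" line can be pulled out and compared. This is only a sketch: the regular expression is written against the single line quoted in the post, and `parse_times` is a hypothetical helper, not something the MilkyWay application provides.

```python
import re

# The line exactly as quoted in the post.
LINE = (
    "WU completed. CPU time: 26.5358 seconds, GPU time: 92.3703 seconds, "
    "wall clock time: 94.065 seconds, CPU frequency: 2.80643 GHz"
)


def parse_times(line: str) -> dict:
    """Return {'CPU': ..., 'GPU': ..., 'wall clock': ...} in seconds."""
    pattern = r"(CPU|GPU|wall clock) time: ([0-9.]+) seconds"
    return {name: float(value) for name, value in re.findall(pattern, line)}


times = parse_times(LINE)
cpu_share = times["CPU"] / times["wall clock"]
gpu_share = times["GPU"] / times["wall clock"]
print(f"CPU busy for {cpu_share:.1%} of wall clock time")
print(f"GPU busy for {gpu_share:.1%} of wall clock time")
```

Comparing the same ratio on a task from before the change would show whether the CPU load really went up or was always at this level.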

|

12)

Message boards :

Number crunching :

Testing ATI Application Availability

(Message 35671)

Posted 15 Jan 2010 by valterc

Post: Thank you all for the hints about installing CAT 9.12 on XP x64. It seems to be working and I am currently crunching MW workunits (and, best of all, getting valid results). However, it would be nice to have app_info available to fine-tune things. I'm thinking mainly of using the 'w' parameter to reduce screen lag while I use this computer for work.

|

13)

Message boards :

Number crunching :

Testing ATI Application Availability

(Message 35644)

Posted 14 Jan 2010 by valterc

Post: Well, I'm no longer able to crunch MW workunits on my ATI 4850... It worked fine until a couple of days ago (using anonymous platform). I don't think it's a good idea to select CPU-only tasks in my preferences (getting some units to crunch with the GPU) and micromanage the whole thing... I also tried restarting BOINC without app_info.xml (losing some important cmdline parameters this way) and all I got was:

1/14/2010 11:58:10 AM Milkyway@home Message from server: No work sent
1/14/2010 11:58:10 AM Milkyway@home Message from server: ATI Catalyst 9.2+ needed to use GPU
1/14/2010 11:58:10 AM Milkyway@home Message from server: Your computer has no NVIDIA GPU

I run XP x64 with Catalyst 8.11 (which is the best one to have on this OS). I hope this will be solved somehow (switching to Collatz for the moment).

|

14)

Message boards :

Number crunching :

my workunits are all marked invalid...

(Message 34898)

Posted 29 Dec 2009 by valterc

Post: Just to let you know about this.

|

15)

Message boards :

Number crunching :

Strange things happen (credit)

(Message 32980)

Posted 3 Nov 2009 by valterc

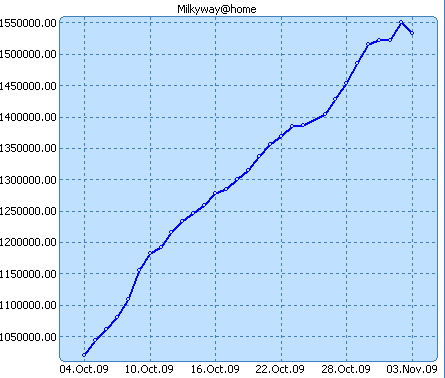

Post: well, look at this:

|

|

16)

Message boards :

Number crunching :

Strange things happen (credit)

(Message 32970)

Posted 3 Nov 2009 by valterc

Post: All the work done yesterday, I mean all the credits gained yesterday, have been lost...

|

17)

Message boards :

Number crunching :

GPU only running one wu at a time?

(Message 32969)

Posted 3 Nov 2009 by valterc

Post: Better to have <count> set to 0.5
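For context, `<count>` here presumably means the `<count>` element inside a `<coproc>` block of an `<app_version>` in app_info.xml, which tells the BOINC client what fraction of a GPU one task occupies. Under that assumption, and assuming the coprocessor type string `ATI` for these cards, the sketch below builds such a fragment and shows how a fractional count maps to tasks running side by side. Both helper names are hypothetical.

```python
import math
import xml.etree.ElementTree as ET


def coproc_fragment(gpu_type: str, count: float) -> str:
    """Build a <coproc> element as it might appear in app_info.xml."""
    coproc = ET.Element("coproc")
    ET.SubElement(coproc, "type").text = gpu_type  # assumption: "ATI"
    ET.SubElement(coproc, "count").text = str(count)
    return ET.tostring(coproc, encoding="unicode")


def tasks_per_gpu(count: float) -> int:
    """How many tasks fit on one GPU if each claims `count` of it."""
    # Small tolerance guards against floating-point error in 1 / count.
    return math.floor(1 / count + 1e-9)


print(coproc_fragment("ATI", 0.5))
print("tasks per GPU:", tasks_per_gpu(0.5))
```

A count of 1 keeps one task per GPU; 0.5 lets two tasks share it, which is what the thread title is asking about.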

|

18)

Message boards :

Number crunching :

Milkyway not running...

(Message 32611)

Posted 21 Oct 2009 by valterc

Post: DA decided that GPU work should be done in strict FIFO order ... that means that if you want to run MW mostly you need to trim your queue to try to avoid large chunks of Collatz being DL. Of course, the issue here is that you have network drops and lowering the queue means you will run out of work ...

I already use app_info; in fact I have a setup that forces CPU only for Seti & Primegrid and GPU only for MW & Collatz. The only way I know to get some MW crunching done is to babysit the whole thing and suspend Collatz from time to time... Oh, btw, a couple of days ago I reset both MW & Collatz after letting them run empty (no new tasks) but I still see the same behavior...

|

19)

Message boards :

Number crunching :

Milkyway not running...

(Message 32601)

Posted 21 Oct 2009 by valterc

Post: Hi all, in short, this is my problem: I would like to run Milkyway most of the time and use Collatz as a backup project. I have some network drops and the connection doesn't come back automatically, so I really need a backup project. My dual-core runs Collatz, SETI and Primegrid with resource share 100 and MW with resource share 700. However, although the resource share is respected by the CPU-only projects (SETI & Primegrid), it seems to be ignored by the ATI GPU-only projects: Collatz is running almost all the time... Any hints? BTW, I use client v6.10.13, all but Primegrid with app_info. No problems at all crunching, i.e. no errors, just the resource share problem. BOINC connects every 0.1 days and has an additional work buffer of 4 days.
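To make the expectation concrete, here is what those resource shares would work out to if they were honored. This is only arithmetic on the numbers in the post, assuming Collatz, SETI and Primegrid each have a share of 100; it does not model how the BOINC client actually schedules GPU work, and `share_fractions` is a hypothetical helper.

```python
def share_fractions(shares: dict) -> dict:
    """Convert raw resource shares into fractions of the total."""
    total = sum(shares.values())
    return {project: value / total for project, value in shares.items()}


# Shares as described in the post (assumption: 100 for each of the three).
all_projects = {"Milkyway": 700, "Collatz": 100, "SETI": 100, "Primegrid": 100}

# Only these two run on the GPU in this setup.
gpu_projects = {name: all_projects[name] for name in ("Milkyway", "Collatz")}

overall = share_fractions(all_projects)
gpu_only = share_fractions(gpu_projects)

for project, fraction in overall.items():
    print(f"{project:>10}: {fraction:.1%} of all work")
for project, fraction in gpu_only.items():
    print(f"{project:>10}: {fraction:.1%} of GPU time, if shares applied per resource")
```

Either way of counting gives Milkyway the large majority, so Collatz running almost all the time is clearly not what the shares ask for.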

|

20)

Message boards :

Number crunching :

6.10.1 Posted.

(Message 30376)

Posted 8 Sep 2009 by valterc

Post: Maybe a little off topic... but what is the meaning of the two lines

ignoring unknown input argument in app_info.xml: --device
ignoring unknown input argument in app_info.xml: 0

that started appearing in stderr after upgrading to 6.10.3?


©2024 Astroinformatics Group